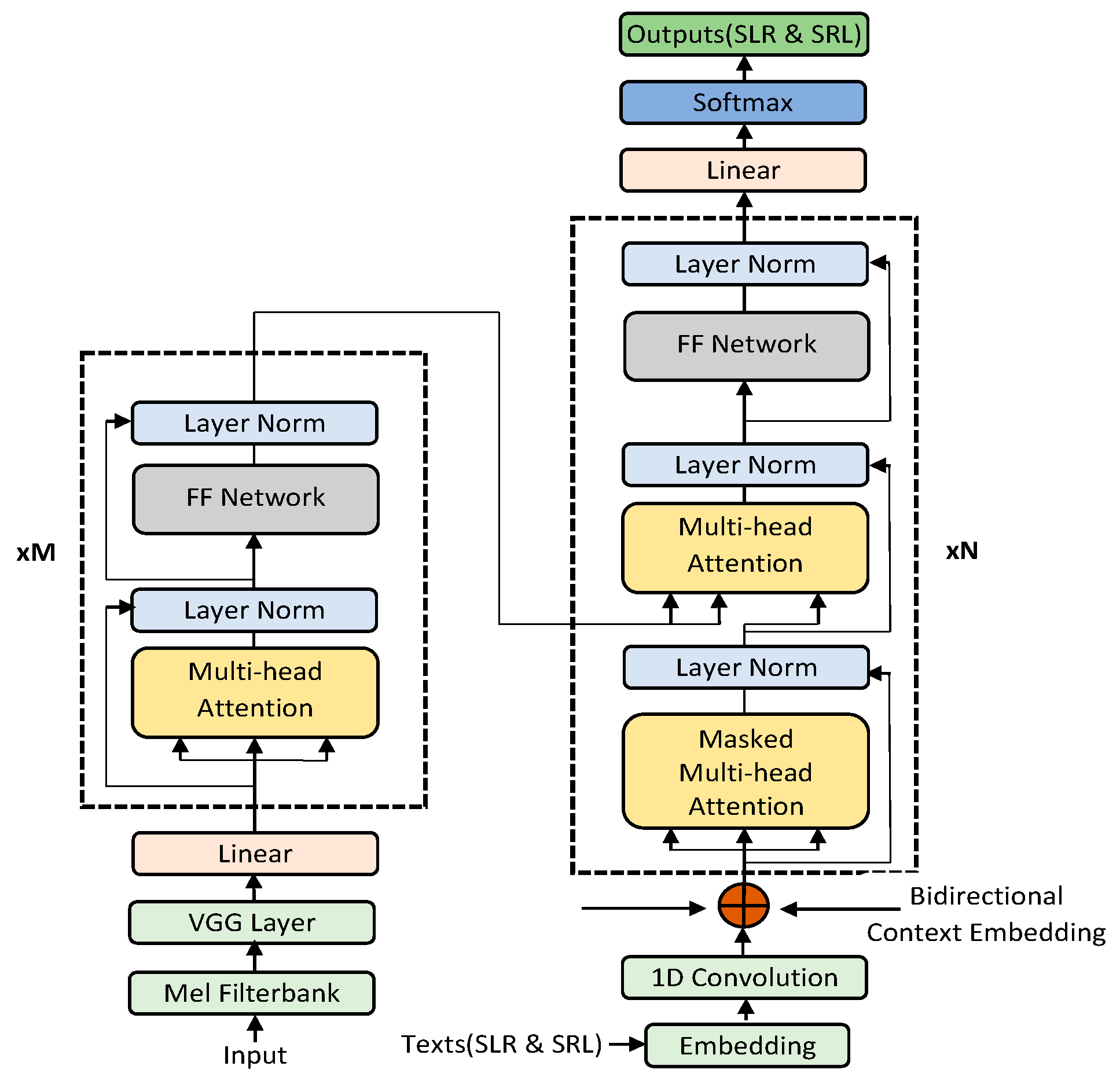

Information | Free Full-Text | A Bidirectional Context Embedding Transformer for Automatic Speech Recognition

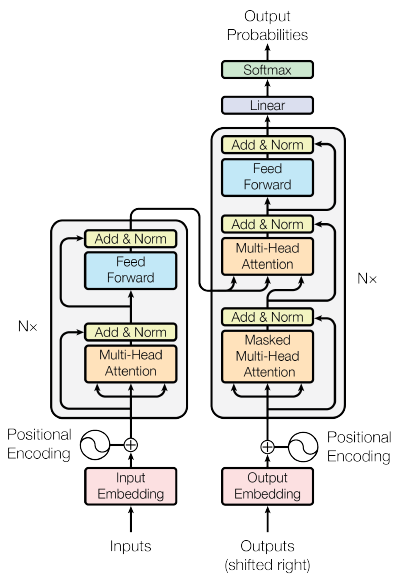

BERT — Bidirectional Encoder Representation from Transformers: Pioneering Wonderful Large-Scale Pre-Trained Language Model Boom - KiKaBeN

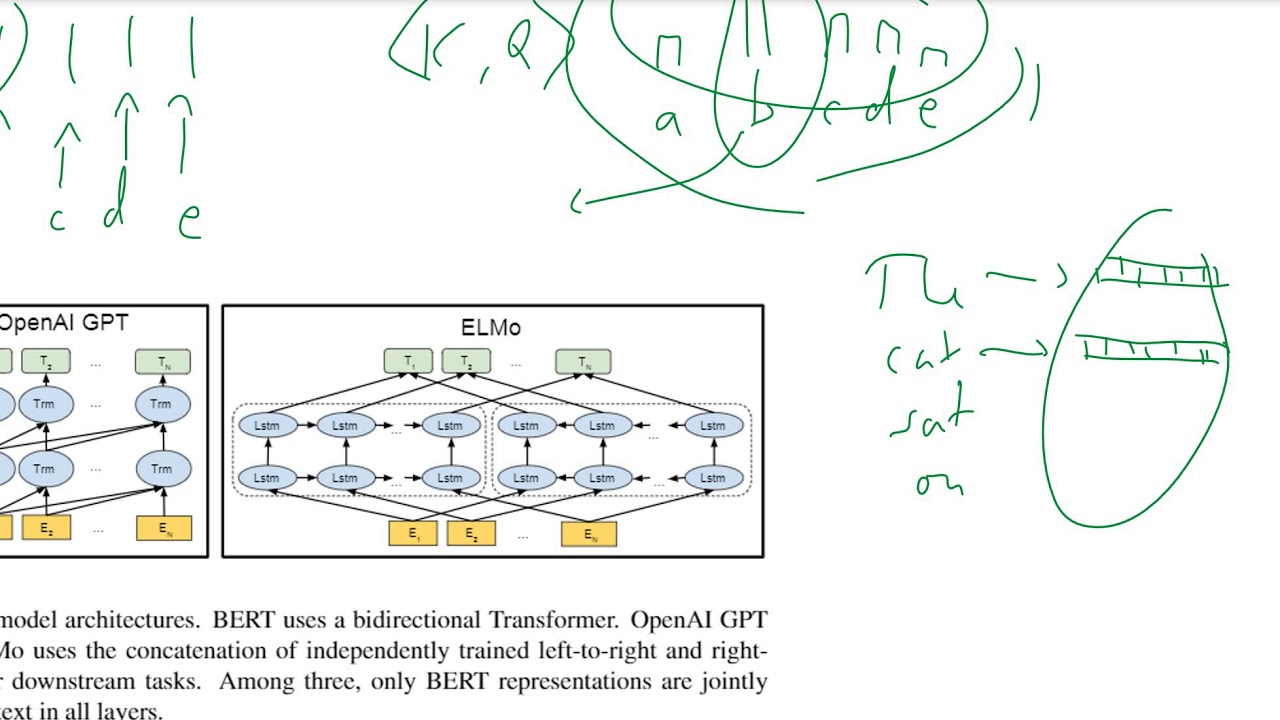

![[Paper Review] BERT(2018), Pre-training of Deep Bidirectional Transformers for Language Understanding](https://blog.kakaocdn.net/dn/ABKAZ/btragLlRgTb/FVmlQHt8XJoRxsvHi0MpM0/img.png)

[Paper Review] BERT(2018), Pre-training of Deep Bidirectional Transformers for Language Understanding

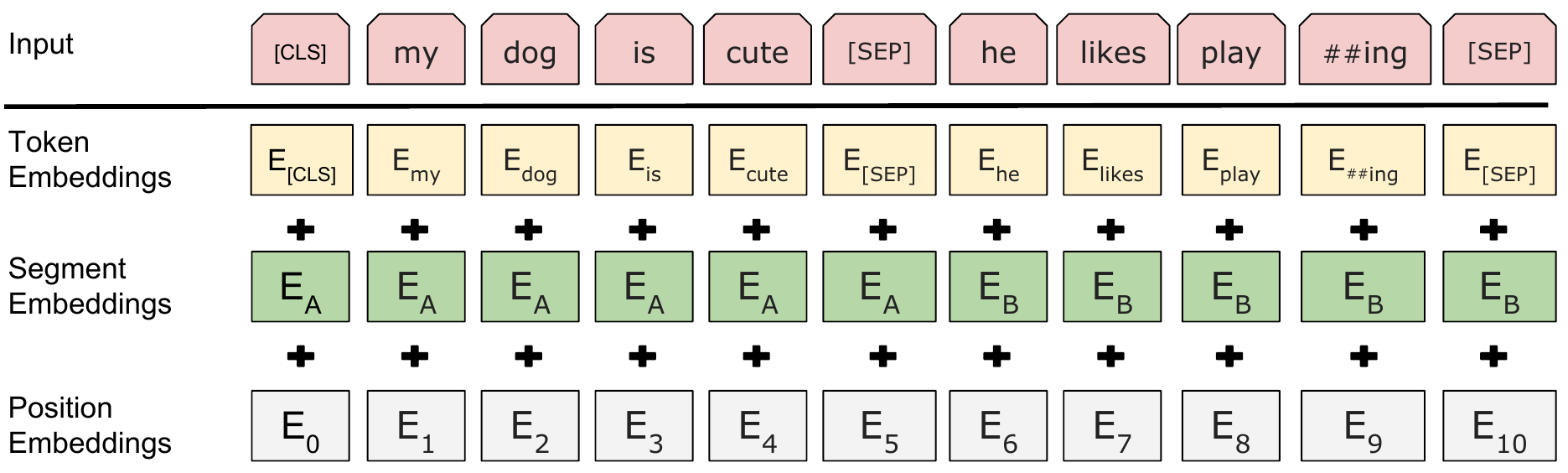

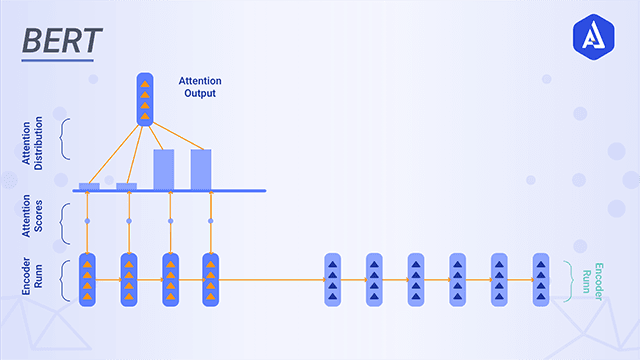

Intuitive Explanation of BERT- Bidirectional Transformers for NLP | by Renu Khandelwal | Towards Data Science

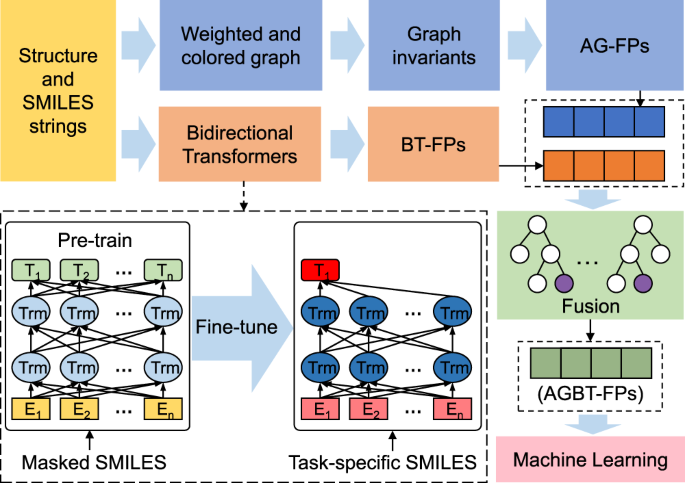

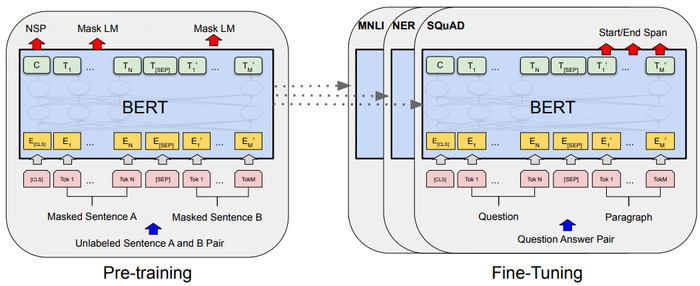

An overview of Bidirectional Encoder Representations from Transformers... | Download Scientific Diagram

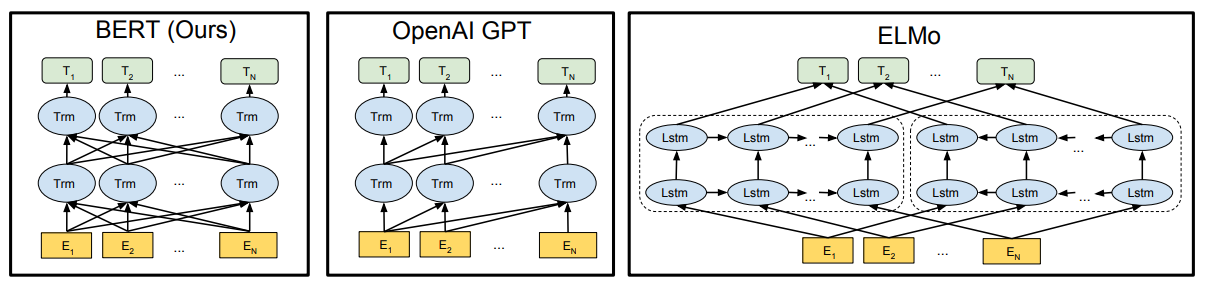

BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding · Issue #114 · kweonwooj/papers · GitHub

STAT946F20/BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding - statwiki

BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding on ShortScience.org